Neutrinos are different from other particles: they barely interact with matter, leaving virtually no trace of their passage. As they travel through space, neutrinos can change flavour (from one type to another), a process called oscillation. An ideal way to measure neutrino properties is to produce a neutrino beam and measure how its composition changes as the neutrinos travel hundreds of kilometres through the Earth. The DUNE experiment will determine whether neutrinos and anti-neutrinos oscillate in the same way. Any difference observed between them (called CP violation) might help explain why there is more matter than anti-matter in the Universe.

Schematic of the DUNE experiment and the probability of detecting neutrinos as a function of the distance travelled
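As a rough illustration of how the detection probability depends on distance, the sketch below uses the standard two-flavour approximation, P(survival) = 1 - sin²(2θ) · sin²(1.267 · Δm² · L / E), with L in km, E in GeV and Δm² in eV². This is a simplification and not the analysis DUNE performs, which requires a full three-flavour treatment including the effect of matter. The function names and the default parameter values (Δm² = 2.5e-3 eV², maximal mixing) are illustrative assumptions, not figures taken from this text.

```python
import math


def survival_probability(distance_km, energy_gev,
                         delta_m2_ev2=2.5e-3, sin2_2theta=1.0):
    """Two-flavour probability that a neutrino keeps its original flavour.

    The default delta_m2_ev2 and sin2_2theta are illustrative assumptions.
    """
    phase = 1.267 * delta_m2_ev2 * distance_km / energy_gev
    return 1.0 - sin2_2theta * math.sin(phase) ** 2


def first_minimum_km(energy_gev, delta_m2_ev2=2.5e-3):
    """Distance at which the survival probability first reaches its minimum."""
    return (math.pi / 2.0) * energy_gev / (1.267 * delta_m2_ev2)


if __name__ == "__main__":
    energy = 2.5  # GeV, an assumed beam energy for illustration only
    for distance in (0, 250, 500, 750, 1000, 1250, 1500):
        p = survival_probability(distance, energy)
        print(f"{distance:5d} km  survival probability = {p:.3f}")
```

In this approximation the composition of the beam changes most strongly at a distance proportional to the neutrino energy, which is why such experiments place the far detector hundreds of kilometres from the source.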

The Deep Underground Neutrino Experiment (DUNE) is a long-baseline neutrino oscillation experiment. It is the flagship particle physics experiment in the US, pursued by an international collaboration of almost 1000 physicists from around the world. DUNE will use state-of-the-art Liquid Argon Time-Projection Chamber (LArTPC) technology for the massive neutrino detectors planned at the Sanford Underground Research Facility in South Dakota.

The UK is the largest contributor to DUNE after the host country, the US. The PPD neutrino group is providing the DAQ system for the far detector and the readout planes (APAs) to record the signatures generated by the neutrino interactions. Additionally, we are taking the lead in developing the event reconstruction software and contributing to the computing infrastructure and organisation of the experiment.

PPD INVOLVEMENTS:

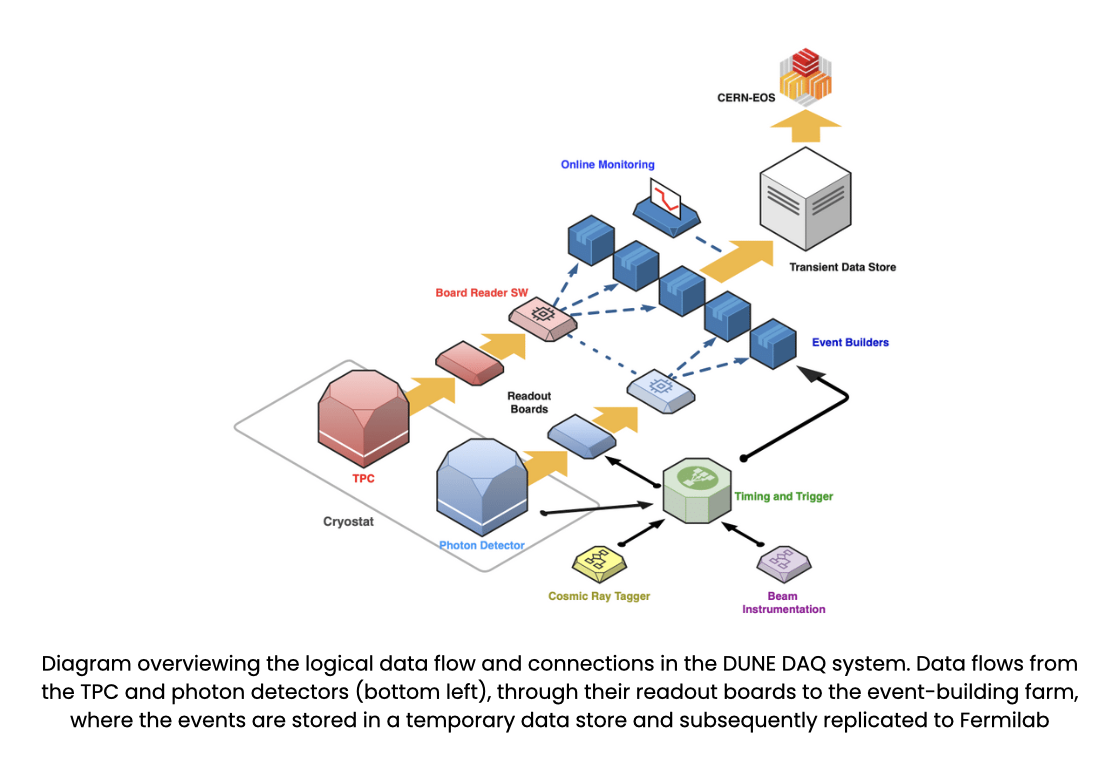

DATA ACQUISITION SYSTEM (DAQ)

The DUNE DAQ system is the largest and most complex that a neutrino experiment has ever required. Because of the challenges posed by the experiment's location, the design of the services and the layout of the physical structure, such as the electronics racks, must be provided by the consortium. PPD provided the technical expertise for designing the services and the physical layout to accommodate the DAQ components, and for designing the power, cooling, signal and cable distribution, and fire safety systems.

A self-triggered system is required that can stream data to a memory store able to hold 10-100 seconds of unfiltered continuous data. In addition, a triggered system is required for beam-initiated events, since it would be neither possible nor sensible to record data from all channels for the entire beam live time. PPD has considerable experience in all of these areas: its expertise in FPGAs and signal processing has been deployed to address the front-end processing element of the DAQ chain.
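The idea of a fixed-depth memory store combined with self-triggering can be sketched in a few lines. The example below is a toy model only and not the DUNE DAQ implementation: the class `StreamBuffer`, its methods, the threshold trigger and all numbers are hypothetical. It keeps the most recent few seconds of a continuous stream, flags samples above a threshold, and reads out only a window around each flagged sample.

```python
from collections import deque


class StreamBuffer:
    """Toy ring buffer holding the latest `depth_seconds` of one channel."""

    def __init__(self, sample_rate_hz, depth_seconds):
        capacity = int(sample_rate_hz * depth_seconds)
        # A deque with maxlen silently discards the oldest sample when full.
        self._samples = deque(maxlen=capacity)
        self._next_index = 0

    def __len__(self):
        return len(self._samples)

    def push(self, value):
        """Append one sample, tagged with a running sample index."""
        self._samples.append((self._next_index, value))
        self._next_index += 1

    def find_triggers(self, threshold):
        """Return indices of buffered samples above the threshold."""
        return [i for i, v in self._samples if v > threshold]

    def read_window(self, start_index, stop_index):
        """Return buffered values with start_index <= index < stop_index."""
        return [v for i, v in self._samples if start_index <= i < stop_index]


if __name__ == "__main__":
    buf = StreamBuffer(sample_rate_hz=10, depth_seconds=2)
    for i in range(50):
        buf.push(100 if i == 40 else 0)  # one artificial pulse at index 40
    for hit in buf.find_triggers(threshold=50):
        print(hit, buf.read_window(hit - 1, hit + 2))
```

Only the window around a trigger is kept, while everything else is overwritten once the buffer wraps around. This is the reason a streaming system can run continuously without recording every channel at all times.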

A major recent development is the adoption of a different technology for the second DUNE module, the so-called Vertical Drift module. The Technological Department (TD) has considerable experience with such systems and is working with PPD to deliver an initial Ethernet readout system. The trigger primitive chain that has already been developed will be deployed within this new system.

COMPUTER PACKAGES

DUNE has assigned offline production management and monitoring to the PPD group. The DUNE production system must support complex workflows, communicate with data catalogues, and optimise the submission and execution of production jobs across the available resources.

Considerable effort has gone into bringing in international resources and interfacing with middleware outside the USA. PPD helped integrate UK computing resources into the existing job submission system, and also provided documentation on how to submit jobs to the CERN batch system. The PPD group devised a system using ETF and CRIC to provide a single repository for all DUNE resource information, monitor the status of all DUNE sites, and spot problems before users do. It helped develop proof-of-concept projects to demonstrate that DIRAC could be used with RUCIO and the SAM Data Catalog as a framework for future production management. In addition, the group showed that DUNE’s plan to use late binding would work with DIRAC.
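Late binding means that a job is matched to a computing resource only once a pilot process is already running on that resource and asks for work, rather than when the job is submitted. The sketch below illustrates the principle with a toy in-memory queue. It does not use the DIRAC API, and the class `CentralTaskQueue`, its methods and the memory-based matching rule are all hypothetical.

```python
class CentralTaskQueue:
    """Toy central queue: jobs wait here until a running pilot asks for work."""

    def __init__(self):
        self._waiting = []  # list of (job_name, needed_memory_gb) tuples

    def submit(self, job_name, needed_memory_gb):
        """Queue a job without deciding where it will run."""
        self._waiting.append((job_name, needed_memory_gb))

    def match(self, pilot_memory_gb):
        """Hand the oldest job that fits to a pilot, or None if nothing fits."""
        for position, (job_name, needed) in enumerate(self._waiting):
            if needed <= pilot_memory_gb:
                del self._waiting[position]
                return job_name
        return None


if __name__ == "__main__":
    queue = CentralTaskQueue()
    queue.submit("reconstruction", needed_memory_gb=8)
    queue.submit("simulation", needed_memory_gb=2)

    # A pilot that has started on a small worker node asks for work first.
    print(queue.match(pilot_memory_gb=4))
    # A pilot on a larger node picks up the job that needs more memory.
    print(queue.match(pilot_memory_gb=16))
```

Because the decision is deferred until a pilot reports what it actually has available, a job is never bound to a site that turns out to be broken or unsuitable.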

Finally, PPD is involved in defining the future workflow system and contributed to the 2022 computing Conceptual Design Report (CDR).